Coordinate ascent MORE with adaptive entropy control for population-based regret minimization

In Proceedings of the Genetic and Evolutionary Computation Conference Companion, Lille, France, 2021

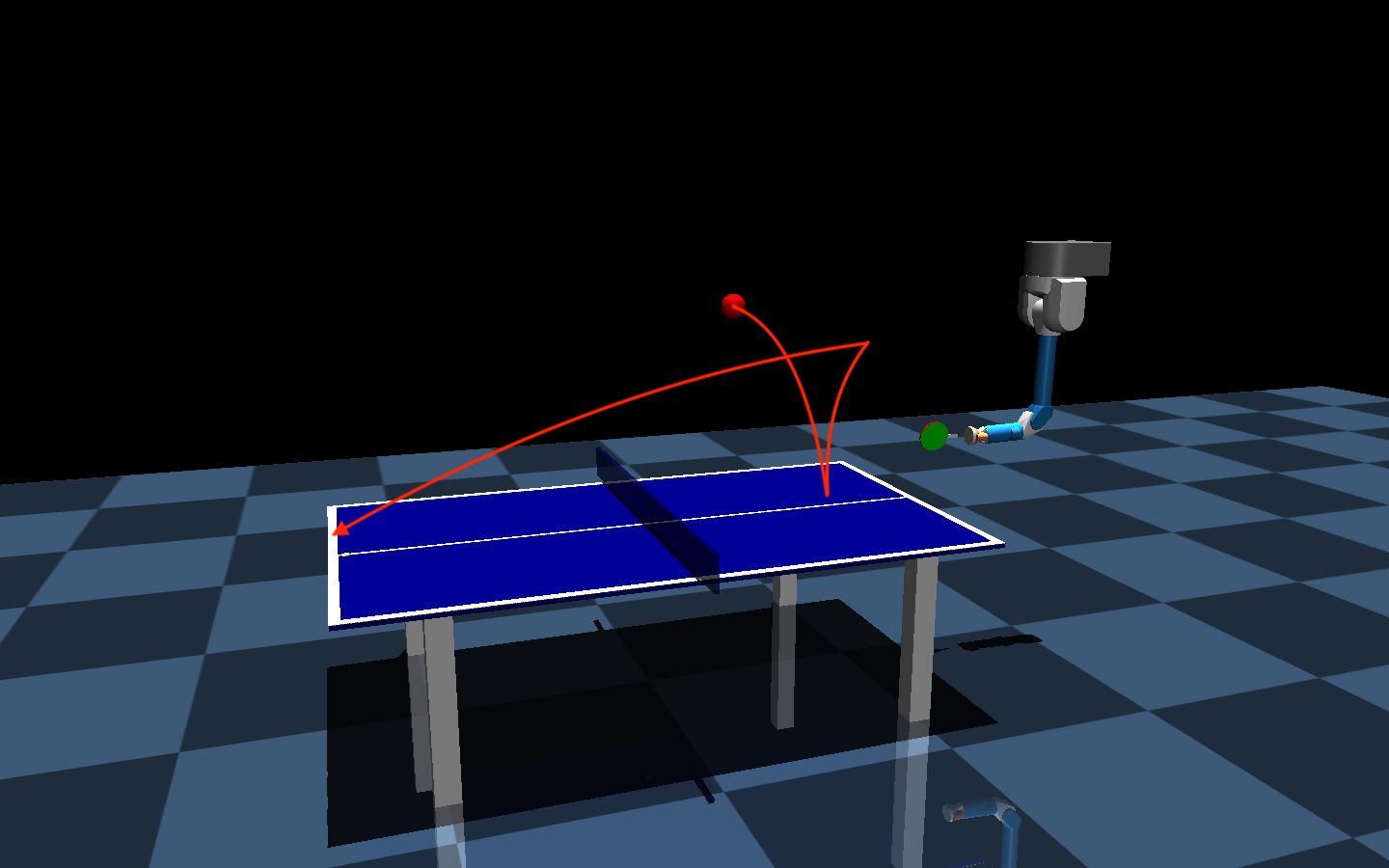

Model-based Relative Entropy Policy Search (MORE) is a population-based stochastic search algorithm with desirable properties such as a well-defined policy search objective, i.e., it optimizes the expected return, and exact closed-form information-theoretic update rules. This is in contrast to existing population-based methods, often referred to as evolution strategies, such as CMA-ES. While these methods work very well in practice, their updates of the search distribution are often based on heuristics, and they do not optimize the expected return of the population but instead implicitly optimize the return of elite samples, which may yield a poor expected return and unreliable or risky solutions. We show that the MORE algorithm can be improved with distinct updates based on coordinate ascent on the mean and covariance of the search distribution, which considerably improves the convergence speed while maintaining the exact closed-form updates. In this way, we can match the performance of the elite samples of CMA-ES while also considerably improving the performance of the sample average. We evaluate our new algorithm on simulated robotic tasks and compare it to the state-of-the-art CMA-ES.
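
The abstract describes the coordinate-ascent idea only at a high level. As a rough illustration, assuming a quadratic model of the return, x^T R x + r^T x with negative definite R, has already been fitted to the population (that regression step is omitted), the sketch below performs one MORE-style closed-form update on the mean with the covariance held fixed, followed by one on the covariance with the mean held fixed, each under its own KL trust region. The function names, the bounds `eps_mu` and `eps_sigma`, and the bisection range are hypothetical choices; the adaptive entropy control from the title is not modeled (there is no entropy multiplier), so this is a minimal sketch, not the authors' implementation.

```python
import numpy as np


def kl_mean_term(mu, mu_q, prec_q):
    """KL(N(mu, S) || N(mu_q, S)) for a shared covariance S with precision prec_q."""
    diff = mu - mu_q
    return 0.5 * diff @ prec_q @ diff


def kl_cov_term(sigma, sigma_q):
    """KL(N(m, sigma) || N(m, sigma_q)) for a shared mean m."""
    dim = sigma.shape[0]
    _, logdet_q = np.linalg.slogdet(sigma_q)
    _, logdet = np.linalg.slogdet(sigma)
    trace_term = np.trace(np.linalg.solve(sigma_q, sigma))
    return 0.5 * (trace_term - dim + logdet_q - logdet)


def solve_eta(kl_of_eta, eps, lo=1e-8, hi=1e8, iters=100):
    """Log-space bisection for the smallest multiplier eta with KL <= eps.

    Relies on kl_of_eta decreasing monotonically in eta, which holds for both
    updates below when the model matrix R is negative definite.
    """
    if kl_of_eta(lo) <= eps:
        return lo  # the unconstrained optimum already lies inside the trust region
    for _ in range(iters):
        mid = np.sqrt(lo * hi)
        if kl_of_eta(mid) > eps:
            lo = mid
        else:
            hi = mid
    return hi  # invariant: kl_of_eta(hi) <= eps


def mean_update(mu_q, sigma_q, R, r, eps_mu):
    """Maximize E[x^T R x + r^T x] over the mean only, covariance held at sigma_q."""
    prec_q = np.linalg.inv(sigma_q)

    def mean_for(eta):
        # Stationarity: (eta * prec_q - 2R) mu = eta * prec_q mu_q + r
        return np.linalg.solve(eta * prec_q - 2.0 * R, eta * prec_q @ mu_q + r)

    eta = solve_eta(lambda e: kl_mean_term(mean_for(e), mu_q, prec_q), eps_mu)
    return mean_for(eta)


def cov_update(sigma_q, R, eps_sigma):
    """Maximize tr(R sigma) over the covariance only, mean held fixed."""
    prec_q = np.linalg.inv(sigma_q)

    def cov_for(eta):
        # Stationarity without an entropy term: sigma^{-1} = prec_q - 2R / eta
        sigma = np.linalg.inv(prec_q - 2.0 * R / eta)
        return 0.5 * (sigma + sigma.T)  # symmetrize against round-off

    eta = solve_eta(lambda e: kl_cov_term(cov_for(e), sigma_q), eps_sigma)
    return cov_for(eta)


if __name__ == "__main__":
    # Hypothetical concave quadratic model with maximizer -(2R)^{-1} r = [1, -2].
    R = -0.5 * np.eye(2)
    r = np.array([1.0, -2.0])
    mu, sigma = np.zeros(2), np.eye(2)
    for _ in range(20):
        mu = mean_update(mu, sigma, R, r, eps_mu=0.5)  # coordinate step on the mean
        sigma = cov_update(sigma, R, eps_sigma=0.01)  # coordinate step on the covariance
    print(mu)  # close to [1, -2]
```

Bisection is used here only because each KL term shrinks monotonically as eta grows when R is negative definite; MORE itself obtains its Lagrange multipliers by minimizing a convex dual function. Separating the two updates lets the mean and the covariance each use their own trust-region size.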

Robust Black-Box Optimization for Stochastic Search and Episodic Reinforcement Learning

Journal of Machine Learning Research, 2024
